SEO is one of the best ways to gain free organic traffic to your website, and knowing how to get your site indexed quickly through Google Search Console will help you improve your rankings. To rank in Google, each page of your website needs to be crawled and indexed by Google, and any crawl errors need to be resolved.

Recently, Google released a new recommendation on how to get websites indexed: serve your content as HTML when publishing to your website, as this speeds up how quickly your pages are indexed.

This is especially useful for websites that frequently publish or update content. Google crawls and indexes a page in two passes. In the first pass, it inspects the HTML only. Then, after a period of time, it renders the full page in the second pass, including any content generated by JavaScript.

Because each website is different and Google’s algorithm is constantly being updated, there is no set time between the first pass and the second. It can take days or even weeks for the process to be completed. If your website relies heavily on JavaScript, that may be why your content is taking weeks to be indexed.

It helps to understand how Google indexes websites and why serving your content as HTML matters: Googlebot can then see all of the main content during the first pass. Googlebot does not fully render and index a page during the first pass because rendering takes processing power and memory. As big as Google may be, its resources are not infinite. So when a page is built with JavaScript, Googlebot has to wait until resources are available before it can render the content.
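To make the first-pass idea concrete, here is a minimal Python sketch that checks whether a phrase is readable in a page's raw HTML without running any JavaScript. It is only an approximation of what a crawler sees before rendering, not how Googlebot actually works. The helper names (`visible_text`, `content_in_raw_html`) and both sample pages are made up for illustration.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text found outside <script> and <style> tags."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # > 0 while inside <script> or <style>
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is not script or style source.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(html):
    """Return the text a reader could see without executing JavaScript."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def content_in_raw_html(html, phrase):
    """True if the phrase appears in the raw HTML's visible text."""
    return phrase.lower() in visible_text(html).lower()


# Hypothetical pages: one serves its content as HTML, one injects it with JavaScript.
STATIC_PAGE = "<html><body><h1>Spring sale</h1><p>All boots 20% off.</p></body></html>"
JS_PAGE = (
    "<html><body><div id='app'></div>"
    "<script>document.getElementById('app').innerHTML = '<p>All boots 20% off.</p>';</script>"
    "</body></html>"
)

print(content_in_raw_html(STATIC_PAGE, "All boots 20% off"))
print(content_in_raw_html(JS_PAGE, "All boots 20% off"))
```

If the main content of one of your own pages is missing from the raw HTML in a check like this, that content probably depends on JavaScript and may have to wait for the second pass.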

Below we outline how to index websites in Google Search Console, how to block crawlers from pages with sensitive information, how backlinks can speed up indexing, and other ways you can rank higher on Google.

How to index websites in Google Search Console

Google Search Console, previously known as Webmaster Tools, is where you go to get your site indexed and to maintain your search rankings. It’s important to know how to use it properly, as it allows you to submit your site to be crawled, monitor your website’s search results and make sure Google has access to your content.

Before you start, make sure your webpages serve their content as HTML to help speed up indexing. If you need help from professionals to get your pages indexed faster, contact Whitehat Agency.

Set up account

- First, sign in to Search Console and go to the home page.

- Enter your website URL in the Add Property box, then click the Add Property button.

Verify Website

- Use your Google Analytics account to verify by clicking the Verify button at the bottom of the Recommended Method tab.

- Once verified, click Continue.

Add sitemap to website

- Go to Crawl in the left-hand menu, then click Sitemaps.

- Click the Add/Test Sitemap button in the top right-hand corner, enter the address of your website’s sitemap and click Test. Once it says ‘test complete’, check the test results for any errors.

- Once there are no errors, enter the sitemap address in Add/Test Sitemap again and, instead of clicking Test, click Submit. Your sitemap has then been added to Google.
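If your website does not already have a sitemap to submit, the sketch below shows roughly what one contains and one way to generate it with Python's standard library. It assumes the basic sitemaps.org format with only the required `<loc>` entry per page. The `build_sitemap` helper and the example.com URLs are placeholders, not part of Search Console.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(page_urls):
    """Return sitemap XML (as a string) listing each URL in a <loc> element."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page_url in page_urls:
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = page_url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder URLs; replace with the pages of your own site.
sitemap_xml = build_sitemap([
    "https://www.example.com/",
    "https://www.example.com/blog/how-to-index-websites/",
])
print(sitemap_xml)
```

Save the output as sitemap.xml at the root of your site, and use that file's address in the steps above.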

Google Website Crawl

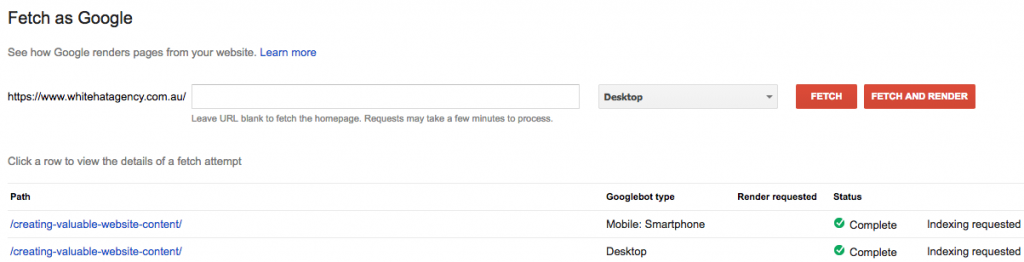

- Go to Crawl in the left-hand menu and select Fetch as Google.

- Leave the text box blank and select Fetch and Render.

- Once it has finished loading, click Submit to Index.

- Click I’m not a robot.

- Select Crawl this URL and Its Direct Links and click Go.

Once loaded, you will see that your URL has been submitted to be indexed. This can take time to process. Once it is complete, you will start to see analytics for your website’s traffic and performance.

Index new content

- Go to Crawl in the left-hand menu and select Fetch as Google.

- Enter the part of the page’s URL that comes after the / and select Fetch and Render.

- Once it has finished loading, click Submit to Index.

- Click I’m not a robot.

- Select Crawl this URL and Its Direct Links and click Go.

Block crawlers from pages with sensitive information

For pages with sensitive information, you need to keep Google away from them. These pages include your RSS feeds, private data and login areas. To block access, create a robots.txt file at the root of your website that tells search engine crawlers which pages they should not visit.
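As a rough illustration, the Python sketch below holds a small robots.txt and uses the standard library's `urllib.robotparser` to check which URLs a crawler that obeys the rules may fetch. The disallowed paths (`/login/`, `/account/`, `/feed/`) and the example.com URLs are assumptions chosen for the example; your own private areas will have different paths. The `is_crawlable` helper is hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content. The paths are placeholders for your own private areas.
ROBOTS_TXT = """\
User-agent: *
Disallow: /login/
Disallow: /account/
Disallow: /feed/
"""


def is_crawlable(robots_txt, page_url, user_agent="Googlebot"):
    """Return True if the given robots.txt rules allow user_agent to fetch page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, page_url)


print(is_crawlable(ROBOTS_TXT, "https://www.example.com/blog/how-to-index-websites/"))
print(is_crawlable(ROBOTS_TXT, "https://www.example.com/login/"))
```

Running a check like this before uploading the file is a quick way to confirm that you have not blocked pages you want indexed.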

Backlinking to increase indexing speed

Backlinks can help your website get indexed faster because they tell Google that your website is trusted and shares high quality content. This helps not only to index your website faster but also improves your SEO score.

For those of you who don’t know what a backlink is, it is a hyperlink on another website’s page that links to a page on your website. Backlinks need to be relevant and come from high quality websites. It’s better to have a few backlinks from trusted, relevant sites than many backlinks from low quality websites.

Other SEO advice

To build further trust with Google and therefore rank higher, you need to publish high quality content on your website. New updates to Google’s algorithms mean blogs and pages have to be of a much higher quality than before. Gone are the days when you could pick one keyword and pack a page with it without much regard for research or quality. Now you should create blogs of at least 1000 words with a trusted author and relevant imagery, and the content has to give users more value. If the keyword is a question, then you must answer it in as much detail as possible.

Your website’s ranking can suffer if Google’s quality raters determine that your page’s content is insufficient and lacks trustworthy expertise or professional advice, that the website or the author has a negative reputation, that the title is irrelevant to the topic of the body text, or that the page promotes its own adverts too heavily.

Keywords are still important in SEO. When you are choosing keywords to target, look for ones that have a high search volume but low competition in your country. Long-tail keywords that are questions tend to have less competition and can sometimes get your blog into ‘Featured Snippets’, which places your article at the top of the results for that keyword search. This is an ideal spot to be in, as anyone who searches that question will see your article first.
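The "high volume, low competition" rule can be sketched as a simple filter. The Python example below is only an illustration: the keyword figures are invented placeholders, not real search data, and the thresholds, the `Keyword` class and the `shortlist` helper are assumptions. In practice you would load the numbers from your keyword research tool.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    phrase: str
    monthly_searches: int  # estimated searches per month in your country
    competition: float     # 0.0 (low) to 1.0 (high)


def shortlist(keywords, min_searches, max_competition):
    """Keep keywords with enough searches and low enough competition, busiest first."""
    picked = [
        k for k in keywords
        if k.monthly_searches >= min_searches and k.competition <= max_competition
    ]
    return sorted(picked, key=lambda k: k.monthly_searches, reverse=True)


# Invented sample values for illustration only.
SAMPLE = [
    Keyword("seo", 90000, 0.95),
    Keyword("how to index websites in google", 1200, 0.30),
    Keyword("what is a backlink", 2500, 0.40),
    Keyword("googlebot javascript rendering delay", 90, 0.10),
]

for keyword in shortlist(SAMPLE, min_searches=1000, max_competition=0.5):
    print(keyword.phrase)
```

With these made-up numbers, the two question-style long-tail phrases pass the filter, while the broad term is dropped for competition and the niche term for volume.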

When it comes to SEO, there can be hundreds of opportunities to improve your website. Getting advice from a trusted SEO agency can help you generate more traffic to your website and increase sales.

If you would like to gain higher rankings in Google or want to know more about how to index websites in Google, contact Whitehat Agency today.
